Robustness Evaluation

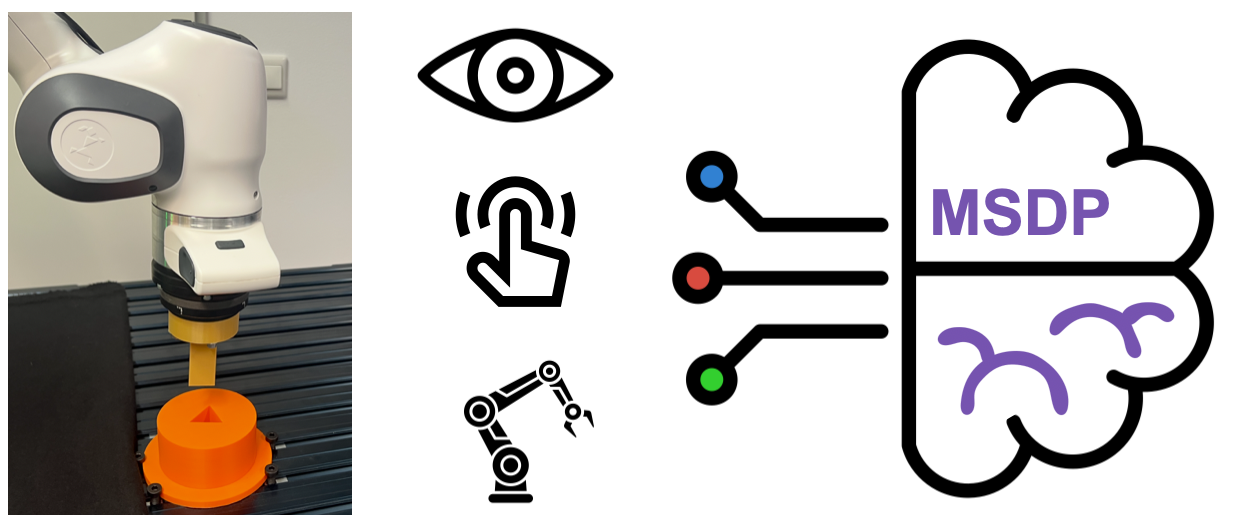

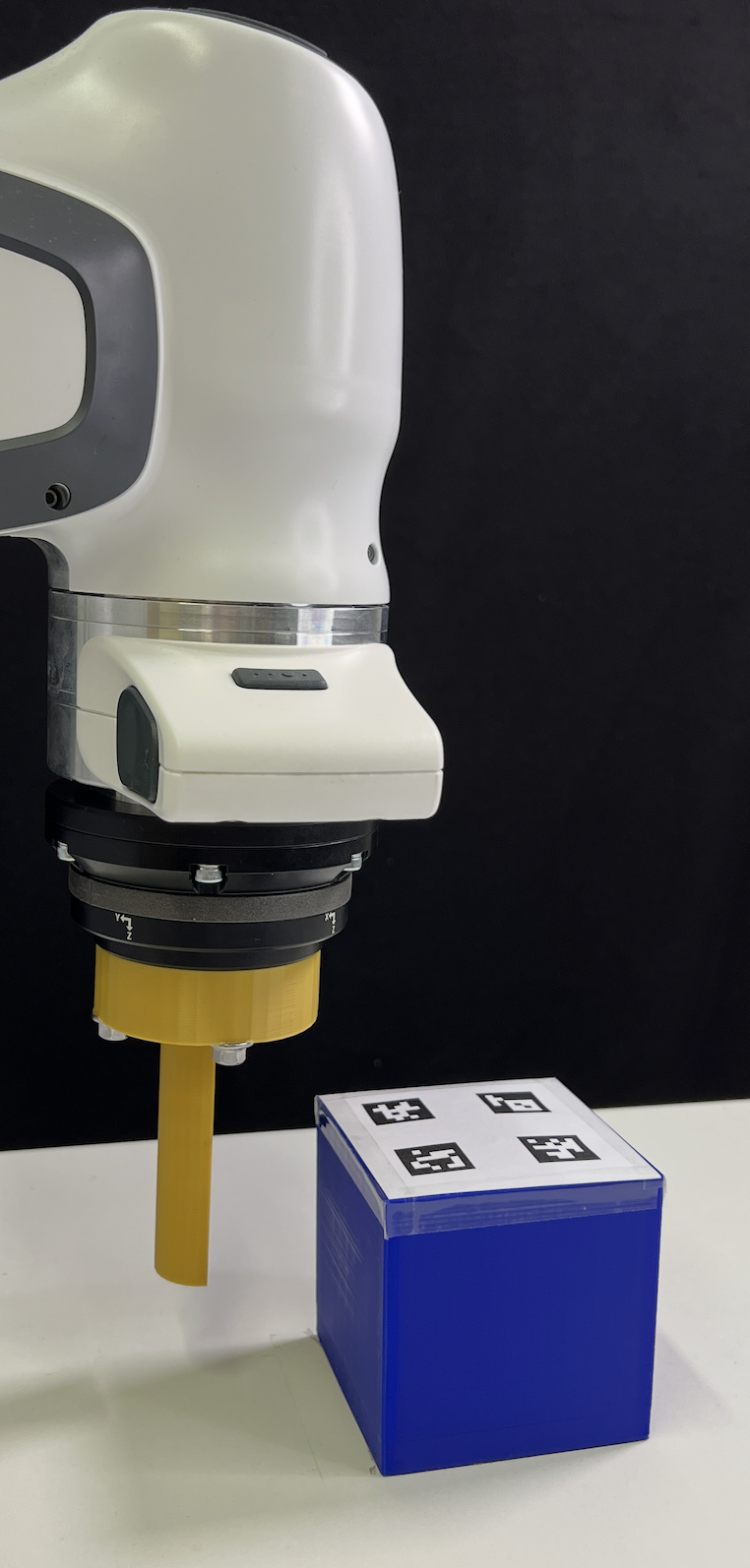

We evaluate the final policy of MSDP-P on the Peg Insertion task under several disturbances that were not observed during training, running 20 trials per condition, to assess the robustness of our pretrained multisensory encoder and policy. Although trained with a Cartesian stiffness of \(K_c\)=2000, the policy achieves a 90 % success rate with decreased stiffness (\(K_c\)=1500) and 100 % with increased stiffness (\(K_c\)=2500). MSDP also remains robust to changed lighting, i.e., back light (100 %), front light (100 %), and disco lights (100 %), to visual occlusion (partly blocked camera view, 95 %), and to external forces.
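With 20 trials per condition, each trial accounts for 5 percentage points, so the reported rates correspond directly to counts of successful trials (e.g., 90 % is 18/20). The two unseen stiffness values also lie 25 % below and above the training value. The short Python sketch below only tabulates the numbers reported in this section. The names `results`, `successes`, and `relative_change` are hypothetical and introduced purely for illustration. External forces are left out because no success rate is reported for them.

```python
# Sketch: tabulate the reported Peg Insertion robustness results.
# All names here are hypothetical; the numbers are those stated in the text.
N_TRIALS = 20       # trials per disturbance condition
K_C_TRAIN = 2000    # Cartesian stiffness used during training

# condition -> reported success rate in percent
# (external forces omitted: no success rate is reported for them)
results = {
    "stiffness K_c=1500": 90,
    "stiffness K_c=2500": 100,
    "back light": 100,
    "front light": 100,
    "disco lights": 100,
    "visual occlusion": 95,
}


def successes(rate_percent: float, n: int = N_TRIALS) -> int:
    """Convert a success rate in percent to a number of successful trials."""
    return round(rate_percent * n / 100)


def relative_change(k: float, k_ref: float = K_C_TRAIN) -> float:
    """Relative change of a stiffness value with respect to the training value."""
    return (k - k_ref) / k_ref


if __name__ == "__main__":
    for name, rate in results.items():
        print(f"{name:20s} {successes(rate):2d}/{N_TRIALS}")
    print(f"K_c=1500: {relative_change(1500):+.0%}")
    print(f"K_c=2500: {relative_change(2500):+.0%}")
```

Running the script prints one `successes/20` line per condition, followed by the stiffness shifts of -25% and +25%.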